Artificial intelligence (AI) is undergoing stormy development, but as a technology it is still partly in its infancy. Experiments abound, from a "rat race" in generative AI among big-tech companies to AI-based behavior recognition systems in supermarkets and gyms. Risk management for AI systems, however, is not keeping pace. For the Netherlands, this means that careful deployment must be paramount and that we must prepare for more incidents involving AI. Citizens, administrators and elected representatives must therefore be vigilant.

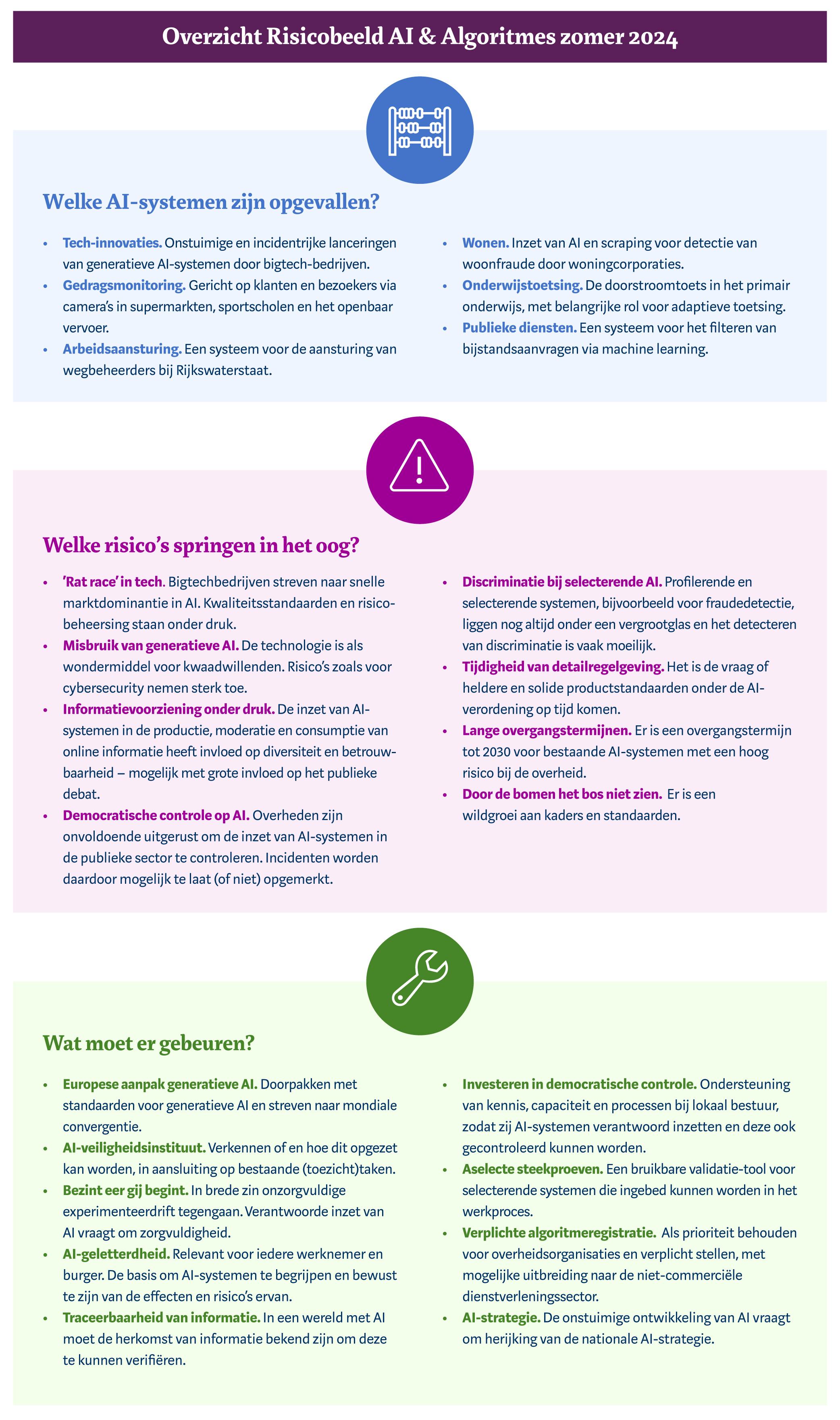

So writes the Autoriteit Persoonsgegevens (AP), the Dutch data protection authority, in the new Report AI & Algorithm Risks Netherlands (RAN). As coordinator of the supervision of algorithms and AI, the AP analyzes the risks surrounding AI systems every six months and advises society, companies, government and politicians on steps to take.

In the Netherlands, trust in AI is lower than in other countries. People will increasingly encounter the risks of AI systems in their daily lives, from misuse of generative AI for cyberattacks and deepfakes to new forms of privacy violations and the potential for discrimination and arbitrariness.

Aleid Wolfsen, chairman of the AP: "These remain stormy times. This is understandable with the emergence of a new systems technology that offers many opportunities, for example in medical treatment and inclusive services. But the risks are also well known. The good news is that serious work is being done on AI regulations on top of requirements that already exist in areas such as data protection, consumer protection and cybersecurity. There is also growing awareness that responsible deployment of AI is labor-intensive and requires a lot from an organization. The warning we issue is that as long as organizations are still unsure about the extent to which they know the risks of AI, they should be cautious about deploying AI."

One example of a measure that helps organizations get a better handle on the deployment of AI systems is so-called random sampling. Many AI systems are used to profile and select people, for example for fraud investigations. In that kind of process, random sampling helps to detect and counter discrimination.
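As a rough illustration of the idea, the sketch below compares the people an AI system selected with a randomly drawn reference sample. It is a minimal example under stated assumptions, not the AP's method: the function names, the `group_of` mapping and the use of a simple share ratio are all hypothetical choices made for this example.

```python
import random
from collections import Counter


def draw_random_sample(case_ids, k, seed=0):
    """Draw k cases at random, independent of any risk score (hypothetical helper)."""
    rng = random.Random(seed)  # fixed seed so the draw is reproducible
    return rng.sample(list(case_ids), k)


def group_shares(cases, group_of):
    """Return the share of each group among the given cases."""
    counts = Counter(group_of[c] for c in cases)
    total = sum(counts.values())
    return {group: n / total for group, n in counts.items()}


def overrepresentation(selected, sample, group_of):
    """Ratio of each group's share among AI-selected cases to its share
    in the random sample. A ratio well above 1 is a signal that the
    selection deserves closer review; it is not proof of discrimination."""
    selected_shares = group_shares(selected, group_of)
    sample_shares = group_shares(sample, group_of)
    return {
        group: selected_shares.get(group, 0.0) / share
        for group, share in sample_shares.items()
    }
```

In practice, an organization would also investigate the randomly drawn cases, so that the outcomes of the AI-driven selection can be compared with the outcomes of the random one.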

For this edition of the report, the AP analyzed, among other things, the risks of AI for online information, such as news. Through the AI systems behind social media and search engines, those platforms have a lot of power over what information people get to see and how they perceive reality. In addition, the rise of generative AI brings with it a high risk of misinformation and disinformation. With AI applications generating lifelike text, images, video and audio, people can no longer trust that what they see or hear is actually true.

Therefore, it is important that people understand how recommendation systems work, and that they have the opportunity to turn off or adjust the system. In addition, people should be able to check the accuracy of information. For example, AI search engines should show the sources of their answers, AI can be used to check whether images and videos are AI-generated, and AI-generated information should be watermarked.

Using a survey of municipal organizations, the AP analyzed the extent to which elected representatives can keep a grip on AI systems deployed by the government. The survey results show that municipal organizations still have limited oversight of their AI systems, that council members doubt whether they have sufficient knowledge of AI, and that only a few local courts of audit investigate AI systems, and then only sporadically. Municipal council members and other representatives of the people need to be empowered to ensure democratic control over the deployment of AI.

Finally, the AP calls on the government to continue to give undiminished priority to algorithm registration by government organizations and to consider having semi-public organizations register their algorithms as well. After all, it is precisely in sectors such as education, healthcare, social housing and public transport that AI is deployed in situations where people are vulnerable.

It is important to avoid frameworks that are too non-committal or that (unwittingly) leave room for standards that are insufficiently precise or measurable, and that sometimes lag behind or conflict with scientific insights. The AP sees the installation of a new cabinet as the moment to review the national AI strategy.